How to Pass Partetags in Complete Multipart Upload

Efficiently Uploading Large Objects to Cloud Storage

In today's era, data is the new oil. Whatever sector you work in or whatever interests you, you've almost certainly heard about how "data" is changing the way we live. Data originates from a variety of places in an organisation. Thanks to Internet gadgets and social media applications, a tremendous amount of data is now being generated. This data must be kept safe and easily accessible.

Data storage refers to how this massive amount of data is stored so that it can be accessed and read by computer systems at a later stage. When it comes to data storage, the cloud offers a comprehensive answer. It is one of the most effective methods for storing big volumes of data.

HOW LARGE CAN THE DATA BE ANYWAY?

Let's start by looking at the size of some typical files to get a sense of the magnitudes we'll be working with. You can expect 1 hour of video to take up around 12 GB of disk space. This may not look like an excessive amount of space once you compare it to the scale of heavy data in industry, which may usually be around 2,000 GB.

As file sizes grow, storing those files can become an enormous challenge for reasons such as an unstable Internet connection, a very high bandwidth requirement, high time consumption and many more.

In this article we will cover an end-to-end solution for uploading a LARGE object efficiently to one of the most popular cloud computing platforms, AWS. Amazon Simple Storage Service (S3) is one of the most interesting of Amazon Web Services' many services.

(Although the technique we are going to discuss here is centred on AWS, it can be generalised for use with other cloud service providers as well.)

Now let's talk about how we are going to upload objects to the S3 bucket.

Well, there is one naive method, which uploads the whole object in a single operation.

    s3client.putObject(new PutObjectRequest(s3Bucket, s3Key, file));

But there are some drawbacks with this approach when uploading large objects.

Let's take a case where we have a file of 100 GB and a fast internet connection with an upload speed of 10 MB/s. The upload will take nearly 3 hours (100 GB at 10 MB/s is about 10,000 seconds). Now, during this time span, what if there is an interruption in the network, or our server crashes, or our service restarts?

Do we have to start the upload process once again? :/

Besides this, AWS itself does not support the upload of objects larger than 5 GB in a single PUT operation.
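To make the numbers above concrete, here is a minimal sketch of the arithmetic. It reads the upload speed as 10 megabytes per second (an assumption about the figure quoted above) and treats the single-PUT limit as 5 × 1024³ bytes. The class and method names are made up for illustration and are not part of any AWS SDK.

```java
public class UploadEstimate {
    // Largest object accepted by a single PUT, taken here as 5 * 1024^3 bytes (assumption).
    static final long SINGLE_PUT_LIMIT_BYTES = 5L * 1024 * 1024 * 1024;

    /** Seconds needed to move sizeBytes at a steady rate of bytesPerSecond. */
    public static double estimateSeconds(long sizeBytes, long bytesPerSecond) {
        if (bytesPerSecond <= 0) {
            throw new IllegalArgumentException("bytesPerSecond must be positive");
        }
        return (double) sizeBytes / bytesPerSecond;
    }

    /** True when the object is too large to be sent in a single PUT. */
    public static boolean needsMultipart(long sizeBytes) {
        return sizeBytes > SINGLE_PUT_LIMIT_BYTES;
    }

    public static void main(String[] args) {
        long size = 100L * 1000 * 1000 * 1000; // 100 GB (decimal)
        long speed = 10L * 1000 * 1000;        // 10 MB/s (assumed reading of the speed)
        double hours = estimateSeconds(size, speed) / 3600.0;
        System.out.println("Estimated upload time in hours: " + hours);
        System.out.println("Needs multipart upload: " + needsMultipart(size));
    }
}
```

At these values the estimate is 10,000 seconds, a little under 3 hours, which is a long window for a single request to stay uninterrupted.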

…ENTERS MULTIPART UPLOAD API…

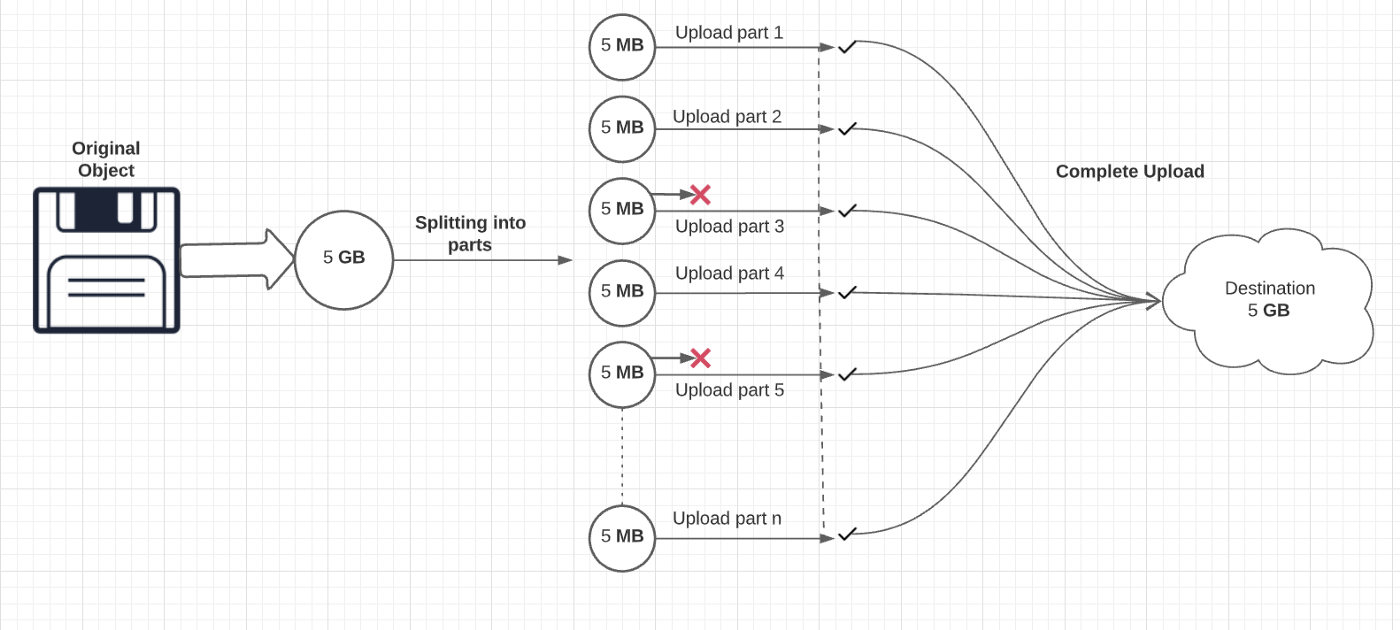

The Multipart Upload API is designed to improve the upload experience for larger objects, which can be uploaded in parts, independently, in any order, and in parallel.

Well, in theory as well as in practice, this is an amazing innovation by AWS.

How does this API work ?

This process involves 3 simple steps:

1. Initiate a multipart upload using the AmazonS3Client.initiateMultipartUpload() method, passing in an InitiateMultipartUploadRequest object, and save the upload ID that the method returns.

    // Initiate the multipart upload.
    InitiateMultipartUploadRequest initRequest = new InitiateMultipartUploadRequest(bucketName, keyName);
    InitiateMultipartUploadResult initResponse = s3Client.initiateMultipartUpload(initRequest);

2. Split the single object into multiple parts and upload each part accompanied by the upload ID and a part number. For each part, call the AmazonS3Client.uploadPart() method. Provide the part upload information using an UploadPartRequest object.

    // Assumes file, contentLength (file.length()), partSize (at least 5 MB)
    // and List<PartETag> partETags are already declared.
    long filePosition = 0;
    for (int i = 1; filePosition < contentLength; i++) {
        // Because the last part could be less than 5 MB, adjust the part size as needed.
        partSize = Math.min(partSize, (contentLength - filePosition));

        // Create the request to upload a part.
        UploadPartRequest uploadRequest = new UploadPartRequest()
                .withBucketName(bucketName)
                .withKey(keyName)
                .withUploadId(initResponse.getUploadId())
                .withPartNumber(i)
                .withFileOffset(filePosition)
                .withFile(file)
                .withPartSize(partSize);

        // Upload the part and add the response's ETag to our list.
        UploadPartResult uploadResult = s3Client.uploadPart(uploadRequest);
        partETags.add(uploadResult.getPartETag());

        filePosition += partSize;
    }

3. Finalise the upload by providing the upload ID and the part number / ETag pairs for each part of the object, using the AmazonS3Client.completeMultipartUpload() method.

    CompleteMultipartUploadRequest compRequest = new CompleteMultipartUploadRequest(bucketName, keyName, initResponse.getUploadId(), partETags);
    s3Client.completeMultipartUpload(compRequest);

Code source: AWS Official Documentation
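The part-splitting loop in step 2 can be tried out without touching S3 at all. The sketch below only computes the (part number, offset, size) triples that such a loop would hand to uploadPart. PartPlanner and Part are hypothetical names introduced here for illustration, not SDK classes.

```java
import java.util.ArrayList;
import java.util.List;

public class PartPlanner {
    /** One planned part: 1-based part number, byte offset into the file, and size in bytes. */
    public static final class Part {
        public final int number;
        public final long offset;
        public final long size;

        Part(int number, long offset, long size) {
            this.number = number;
            this.offset = offset;
            this.size = size;
        }
    }

    /** Splits contentLength bytes into consecutive parts of at most partSize bytes. */
    public static List<Part> plan(long contentLength, long partSize) {
        if (contentLength <= 0 || partSize <= 0) {
            throw new IllegalArgumentException("contentLength and partSize must be positive");
        }
        List<Part> parts = new ArrayList<>();
        long position = 0;
        for (int i = 1; position < contentLength; i++) {
            // Only the last part can come out smaller than partSize.
            long size = Math.min(partSize, contentLength - position);
            parts.add(new Part(i, position, size));
            position += size;
        }
        return parts;
    }

    public static void main(String[] args) {
        long mb = 1024L * 1024;
        for (Part p : plan(12 * mb, 5 * mb)) {
            System.out.println("part " + p.number + " offset=" + p.offset + " size=" + p.size);
        }
    }
}
```

A 12 MB object with a 5 MB part size yields three parts of 5 MB, 5 MB and 2 MB, so only the final part falls under the minimum.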

Multipart upload also allows you to upload a single object as a set of parts. After all parts of your object are uploaded, Amazon S3 presents the data as a single object. With this feature you can create parallel uploads, pause and resume an object upload, and begin uploads before you know the total object size.

Advantages of MultiPart Upload:

- You can upload parts in parallel to improve performance.

- You can upload an object while it is being created.
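As a rough illustration of the first advantage, the sketch below submits one task per part to a fixed thread pool and collects the resulting ETags in part order. The uploadPart function passed in is a stand-in (an assumption): in real code it would wrap a call such as s3Client.uploadPart(...) and return the part's ETag.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.function.IntFunction;

public class ParallelPartUpload {
    /**
     * Runs uploadPart for part numbers 1..partCount on a fixed thread pool.
     * The returned list holds the ETag of part n at index n - 1.
     */
    public static List<String> uploadAll(int partCount, int threads, IntFunction<String> uploadPart)
            throws InterruptedException, ExecutionException {
        ExecutorService pool = Executors.newFixedThreadPool(threads);
        try {
            List<Future<String>> futures = new ArrayList<>();
            for (int partNumber = 1; partNumber <= partCount; partNumber++) {
                final int n = partNumber;
                Callable<String> task = () -> uploadPart.apply(n);
                futures.add(pool.submit(task));
            }
            // Parts may finish in any order; reading the futures in submission
            // order keeps the ETags aligned with their part numbers.
            List<String> eTags = new ArrayList<>();
            for (Future<String> future : futures) {
                eTags.add(future.get());
            }
            return eTags;
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) throws Exception {
        // Stand-in uploader that just fabricates an ETag string for each part.
        List<String> eTags = uploadAll(4, 2, n -> "etag-" + n);
        System.out.println(eTags);
    }
}
```

The pool size is the knob to tune here: more threads help only until the available upload bandwidth is saturated.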

Well, this approach helps us to upload the object efficiently.

But it still does not solve the problem of object creation. The problems we were facing in the uploading process can also occur while the object is being created. There is still some scope for parallelisation in this process.

How can we improve this?

(SPOILER: WE ARE GOING TO USE THE POPULAR OLD-SCHOOL APPROACH: DIVIDE & CONQUER)

Let's say we also create the whole object in parts…

In theory, what we want to do here is create a small part of the whole object and, every time we create such a part, upload it using s3client.uploadPart so that it is saved at the server's end. This reduces the trouble caused by any network or bandwidth related interruptions during the creation of the object as well.

For this to happen we require just 2 building blocks:

1. The first is, of course, the scheduler that creates each part of the object.

2. Secondly, we need a bucket or a table or anything else where we can store, modify and extract the ingredients required for the above multipart upload, such as partETags, uploadId and partNumber.

The overall process here will be the same as the multipart upload above; we are just storing the ingredients of each part upload as metadata.
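The second building block can be pictured as a small state holder. The sketch below is an in-memory stand-in, assuming that a real deployment would back the same three values (uploadId, next part number, part number to ETag map) with a database table or similar so they survive restarts. UploadState and its method names are hypothetical.

```java
import java.util.Map;
import java.util.TreeMap;

public class UploadState {
    private String uploadId;        // saved once, right after the upload is initiated
    private int nextPartNumber = 1; // S3 part numbers start at 1
    // TreeMap keeps part numbers sorted, which is handy when completing the upload.
    private final Map<Integer, String> partETags = new TreeMap<>();

    /** True until an upload ID has been saved, i.e. before the first part. */
    public synchronized boolean needsInitiation() {
        return uploadId == null;
    }

    public synchronized void saveUploadId(String id) {
        this.uploadId = id;
    }

    public synchronized String getUploadId() {
        return uploadId;
    }

    public synchronized int getNextPartNumber() {
        return nextPartNumber;
    }

    /** Records the ETag returned for a part and advances the part counter. */
    public synchronized void recordPart(int partNumber, String eTag) {
        partETags.put(partNumber, eTag);
        nextPartNumber = Math.max(nextPartNumber, partNumber + 1);
    }

    /** Returns a sorted copy so callers cannot modify the stored map. */
    public synchronized Map<Integer, String> getPartETags() {
        return new TreeMap<>(partETags);
    }

    public static void main(String[] args) {
        UploadState state = new UploadState();
        state.saveUploadId("example-upload-id");
        state.recordPart(state.getNextPartNumber(), "etag-a");
        state.recordPart(state.getNextPartNumber(), "etag-b");
        System.out.println(state.getUploadId() + " " + state.getPartETags());
    }
}
```

Keeping the ETags keyed by part number means a retried part simply overwrites its earlier entry instead of creating a duplicate.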

Now let's see how it will look in action:

Below is a fairly self-explanatory implementation in Java:

- Here we initiate the multipart upload only for the 1st part and use this uploadId for the whole multipart upload.

    // Initialise the partNumber in the table as 1.
    // Extract the partNumber from the dynamic table.
    Integer partNo = extractFromTable(PART_NO_STORED_IN_TABLE);

    if (partNo == 1) {
        InitiateMultipartUploadRequest initRequest = new InitiateMultipartUploadRequest(bucketName, keyName);
        InitiateMultipartUploadResult initResponse = s3client.initiateMultipartUpload(initRequest);
        storeTableContent(UPLOAD_ID, initResponse.getUploadId());
    }
    // Only for the 1st part do we have to save the uploadId from initResponse;
    // this uploadId is then used for uploading all the parts.

- Now upload the part with the credentials (bucketName, keyName, uploadId, partNo, file length and file).

Remember that every part except the last one should be at least 5 MB; otherwise you'll get the error "Request entity is too small.."

    // Upload the part that we have created.
    String uploadId = extractFromTable(UPLOAD_ID);
    UploadPartRequest uploadRequest = new UploadPartRequest()
            .withBucketName(bucketName)
            .withKey(keyName)
            .withUploadId(uploadId)
            .withPartNumber(partNo)
            .withPartSize(file.length())
            .withFile(file);
    UploadPartResult uploadResult = s3client.uploadPart(uploadRequest);

- Split the partETag into its 2 components, i.e. partNumber and eTag, and store them as a Map in the dynamic table.

    Map<Integer, String> partETagsMap = extractFromTable(PART_E_TAGS_STORED_IN_TABLE);
    PartETag partETag = uploadResult.getPartETag();
    String etag = partETag.getETag();
    Integer partNumber = partETag.getPartNumber();
    partETagsMap.put(partNumber, etag);
    addTableContent(PART_E_TAGS_STORED_IN_TABLE, partETagsMap);

- Now, if we have reached the last part of the object, we have to complete the upload using the above credentials.

    // Rebuild the PartETag list in ascending part-number order,
    // which is the order S3 expects in the completion request.
    List<PartETag> partETags = new ArrayList<PartETag>();
    for (Integer part : new TreeSet<Integer>(partETagsMap.keySet())) {
        PartETag currentPartETag = new PartETag(part, partETagsMap.get(part));
        partETags.add(currentPartETag);
    }
    CompleteMultipartUploadRequest compRequest = new CompleteMultipartUploadRequest(bucketName, keyName, uploadId, partETags);
    s3client.completeMultipartUpload(compRequest);

Benefits of this technique:

- You can create parts as well as upload parts in parallel to improve throughput.

- It greatly reduces the chance of having to restart the whole create-and-upload process, as it creates and uploads parts of around 5 MB every time.

That's it! Now every time our scheduler runs, it creates a small object, uploads it, and ultimately uploads the whole object. To verify, we can go and check our S3 bucket.
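To see the whole loop run without an AWS account, here is a small simulation of the scheduler-driven flow. MultipartClient is a hypothetical three-method interface standing in for the S3 calls used above, and InMemoryClient is a fake that fabricates IDs and ETags; neither exists in the AWS SDK. The fields of the sketch play the role of the metadata table.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class ScheduledUploadSketch {
    /** Hypothetical minimal view of the three S3 operations used in this article. */
    public interface MultipartClient {
        String initiate();
        String uploadPart(String uploadId, int partNumber, byte[] data);
        void complete(String uploadId, List<Integer> partNumbers, List<String> eTags);
    }

    /** Fake client for local experiments; it only records what was completed. */
    public static final class InMemoryClient implements MultipartClient {
        public final List<Integer> completedParts = new ArrayList<>();

        public String initiate() {
            return "fake-upload-id";
        }

        public String uploadPart(String uploadId, int partNumber, byte[] data) {
            return "etag-" + partNumber + "-" + data.length;
        }

        public void complete(String uploadId, List<Integer> partNumbers, List<String> eTags) {
            completedParts.addAll(partNumbers);
        }
    }

    // In-memory stand-ins for the values kept in the metadata table.
    private String uploadId;
    private int partNo = 1;
    private final Map<Integer, String> partETags = new TreeMap<>();
    private final MultipartClient client;

    public ScheduledUploadSketch(MultipartClient client) {
        this.client = client;
    }

    /** One scheduler run: upload the freshly created part, and finish if it is the last one. */
    public void runOnce(byte[] partData, boolean isLastPart) {
        if (partNo == 1) {
            uploadId = client.initiate(); // only the first run initiates the upload
        }
        String eTag = client.uploadPart(uploadId, partNo, partData);
        partETags.put(partNo, eTag);
        partNo++;
        if (isLastPart) {
            // TreeMap iteration is sorted, so parts are passed in ascending order.
            client.complete(uploadId,
                    new ArrayList<>(partETags.keySet()),
                    new ArrayList<>(partETags.values()));
        }
    }

    public static void main(String[] args) {
        InMemoryClient fake = new InMemoryClient();
        ScheduledUploadSketch sketch = new ScheduledUploadSketch(fake);
        sketch.runOnce(new byte[8], false);
        sketch.runOnce(new byte[8], false);
        sketch.runOnce(new byte[3], true);
        System.out.println("Completed parts: " + fake.completedParts);
    }
}
```

In a real service each runOnce call would be triggered by the scheduler, and the three fields would be read from and written back to the table on every run.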

All in all, we have achieved maximum parallelization in this process of creating and uploading large objects in Amazon S3. In upcoming blogs, we'll look at how this optimized technique can be extended to other cloud providers as well.

Stay Connected!


Source: https://tech.oyorooms.com/efficiently-uploading-large-objects-to-cloud-storage-8712284b0679
